I traveled through airports and reported in sports stadiums this year. At each, I was asked to scan my face for security.

An AI security camera demo at an event in Las Vegas, Nevada.

(Bridget Bennett / Getty Images)

In the fall, my partner and I took two cross-country flights in quick succession. The potential dangers of flying, exacerbated by a few high-profile plane crashes earlier in the year, seemed to subside in the national consciousness. There were other tragedies and failures to worry about. Still, the ordeal of flying necessitates the ordeal of passing through airport security, one of the United States’s most glaring, and frustrating, post-9/11 bureaucratic slogs.

By the time I was old enough to fly as an unaccompanied minor in 2003, the irrevocability of the TSA, much like other government acronyms (FBI, CIA, DHS), had become so firmly established as to seem eternal. I remember, in 2006, when it was announced, after a liquid bomb threat in London, that liquids in bags would be restricted to 3.4-ounce containers and shoe removal would become mandatory. I remember the beginning of TSA PreCheck, and the implementation of full-body scanners. What I don’t remember is when exactly we started to be asked to scan our faces in order to get past the security line.

On our first fall trip, my partner and I just happened to be flying on 9/11. “Happened to” is inaccurate; we chose to fly on that date given how, according to our logic, the lingering superstition of plane hijackings would result in fewer people buying plane tickets, and thus presumably shorter security lines and less crowded flights. Maybe in previous years this would have been the case. On this year’s 9/11, there were as many travelers as there had ever seemed to be.

In front of us, a man made his way to the TSA agent at the security checkpoint. The agent asked for the man’s ID then motioned for him to stand in front of a camera, which was embedded in a small screen that displayed a cutout where his face would be captured. Instead, the man requested that his photograph not be taken, an option I knew to be technically available but one I had never seen a traveler actually make use of. Most people, including myself, have simply acquiesced to the new format: The screen stresses that a passenger may opt out by advising “the officer if you do not want your photo taken,” but also emphasizes that the picture, once shot, is immediately deleted. Little reporting has been done about whether this is true—if the photo is truly deleted and in what circumstances it would be stored. All official info comes from the TSA, which has said it keeps photos “in rare instances.” As with so many technologies that are used for surveillance but are currently optional, there’s a pressure to simply give in. It takes a few seconds. Why not?

This logic has always troubled me. Until this stranger modeled how simple it was to say no, I had assumed that opting out would only make the process slower and more bureaucratic than it already is, given the way airport security typically functions, and especially given the Trump administration’s blackbagging of suspected criminals and migrants off city streets in broad daylight and the invasion of privacy by police and other law enforcement agencies. But after the man in front of me opted out, the TSA agent just asked for his boarding pass, scanned it, and moved on. My partner and I followed suit. Until the rules inevitably change, we may never opt in again.

Security checkpoints have always been fraught for me. I had never thought to worry about increased scrutiny based on my ethnicity until I was in my teens, when it became impossible to ignore how often I’d be pulled aside for additional screening at sporting events, in subways, and, most often, in airports. It wasn’t a technology enforcing that bias. It was other people. Traveling in public feels more tenuous now that technology is being layered onto already existing human error.

Facial recognition, by no means a new concept, still has the valence of a far-off technology, one whose use is better in theory than in practice. In 2017, certain airlines like JetBlue, in collaboration with Customs and Border Protection, began trial runs of a new system that allowed passengers to choose to scan their face instead of their boarding passes. Immediately, concerns over privacy were raised, but the upside, according to airline executives, was efficiency and enhanced security. A quote by Benjamin Franklin comes to mind, often used when discussing privacy concerns, though its original context is more prosaic: “Those who would give up essential liberty to purchase a little temporary safety deserve neither liberty nor safety.” Franklin was referring to a taxation dispute involving the Pennsylvania General Assembly, not invasive technology. Nonetheless, the sentiment in this context offers a productive perspective to engage with, the urgent awareness of encroaching potential civil rights infringements and voluntary abdications of privacy.

It turns out there’s a term for this, “mission creep,” or, per Merriam-Webster, “the gradual broadening of the original objectives of a mission or organization.” I’m compelled by the Cambridge Dictionary’s addition to the definition, “so that the original purpose or idea begins to be lost.”

Dreams of technological advancement are really fantasies of convenience. Tech entrepreneurs, whose delusions the general public is forced to witness come to fruition, frame the future in negative and/or substitutional terms, swapping out the supposedly cumbersome and analog in favor of the stripped down, digital, and efficient. Take Elon Musk and DOGE, or Meta and its heavy investment in wearable augmented-reality technology. Soon, we’ll no longer have to X, the tech capitalist mindset goes. Wouldn’t it be amazing if you could just Y?

One of the technological focuses of the past several years has been speed: reducing wait times, shortening lines, erasing friction in daily public life wherever possible. To enact this, clunky old systems must be eliminated in favor of newer, sleeker models. Such upgrades, whether to airport security, public school monitoring systems, personal surveillance software, or federal housing, are never described as anything other than a kind of refresh. But really, whenever there’s a give, there’s always a take.

In May, Milwaukee’s police department announced that it was considering trading over 2 million mugshots in its database to the tech company Biometrica for free use of its facial recognition program. Pushback from local officials and citizens ensued, with the police department responding that it was taking public concerns seriously while still considering the offer. The deal would involve Biometrica receiving data from the police department in exchange for software. Alan Rubel, an associate professor at the University of Wisconsin–Madison studying public health surveillance and privacy, spoke to WPR about the issue and drew attention to the offer’s language, saying that a trade, rather than a purchase of the data, would be “very useful for that company. We’ve collected this data as part of a public investment, in mugshots and the criminal justice system, but now all that effort is going to go to training an AI system.”

Popular

“swipe left below to view more authors”Swipe →

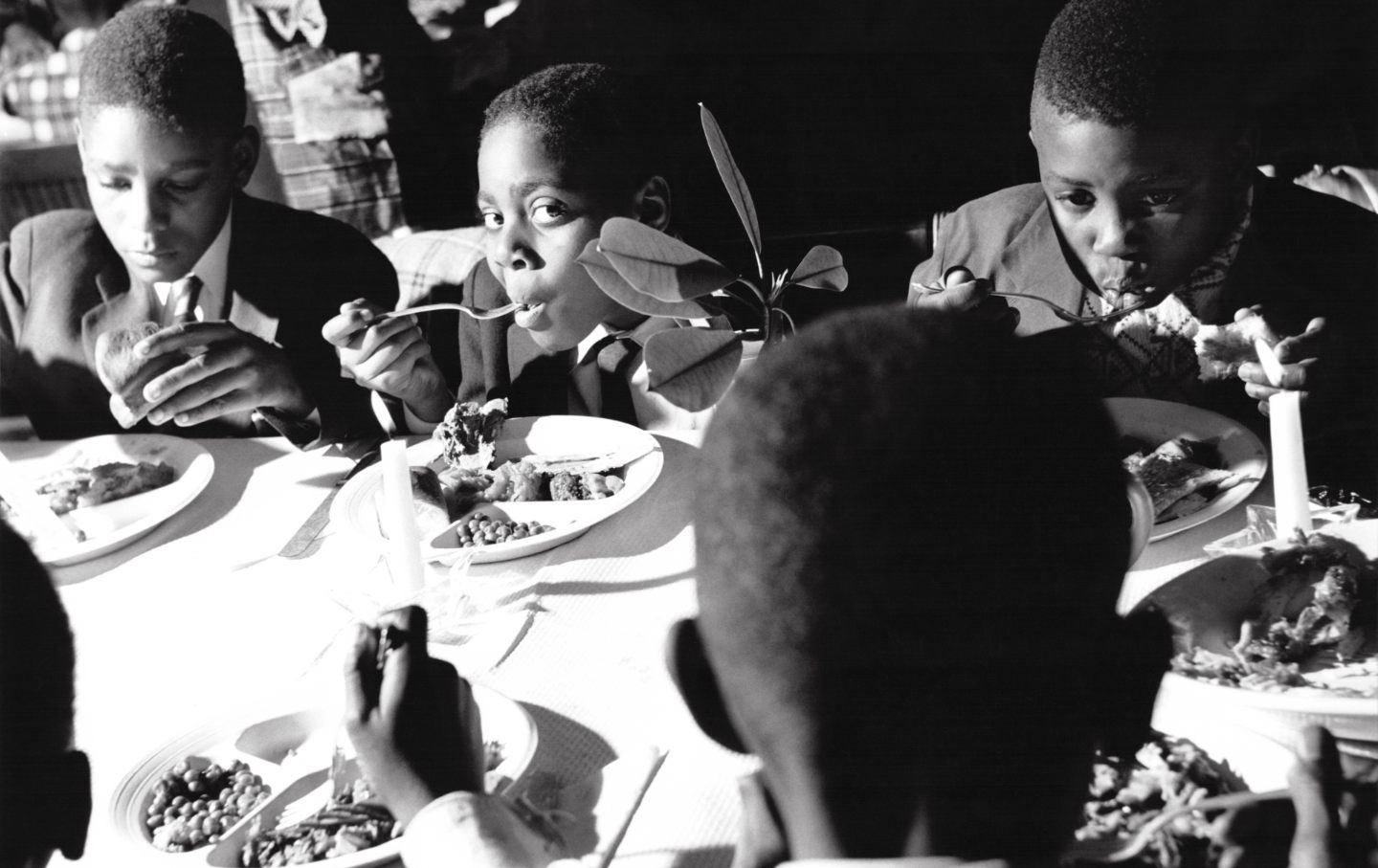

Disproportionately represented races in the American criminal justice system can only mean disproportionate bias in an AI system trained to recognize certain kinds of faces. Those with records, and those without; legal and undocumented immigrants. It’s difficult, in these scenarios, not to parrot the same othering language as the state, to enforce a divide between “us” and “them.” I imagine this is a subtle knock-on effect of the normalization of these procedures and these surveillance technologies, the constant separation between good citizens and bad. Indeed, as Trump’s crackdown on immigrants continues to ensnare brown people regardless of legal status, ICE agents have employed facial recognition tools on their cellphones to identify people on the street, scanning faces and irises to both gather data and compare photographs to various troves of location-based information.

Lost in this anxiety over the potential use of biometric data by private companies and federal agencies is how exactly that data, whether a retina scan or fingerprint, is verified. Capturing these various pieces of information for the sake of surveillance is useless without a database to measure against. Of course, as with the TSA, almost all of these tech companies are working in tandem with various branches of the government to check pictures and prints against passport and Social Security information. For now, much of this data is separated between different agencies rather than kept in a single, unified database. But AI evangelists like tech billionaire Larry Ellison, cofounder of Oracle, envision a world where governments house all their private data in one server for the purposes of empowering AI to cut through red tape. In February, speaking via video call at the World Governments Summit in Dubai to former UK prime minister Tony Blair, Ellison urged any country that hopes to take advantage of “incredible” AI models to “unify all of their data so it can be used and consumed by the AI model. You have to take all of your healthcare data, your diagnostic data, your electronic health records, your genomic data.”

Ellison’s comments at similar gatherings point to his assumption that, with the proliferation of AI in every digital apparatus, a sort of beneficent panopticon will emerge, with AI used as a check. “Citizens will be on their best behavior because we’re constantly watching and recording everything,” Ellison said at Oracle’s financial analyst meeting last September. How exactly this will result in anything like justice isn’t specified. Rampant surveillance is already being utilized by law enforcement in “troubled” areas with low-income residents, high concentrations of black and Latino workers, and little local municipal investment. Police helicopters frequently patrol the area where I live in Las Vegas, Nevada, flying low enough to shake our windows and drown out all other sounds.

In the last year, Vegas’s metropolitan police department set up a mobile audiovisual monitoring station in a shopping center down the street from me. It’s a neighborhood that has been steadily hollowed out by rising home prices, a mix of well-off white retirees and working-class black laborers with long commutes into the city. Periodically, an automated announcement plays assuring passersby that they are being recorded for their own safety.

While reporting at Las Vegas’s Sphere this past summer, I waited in line for a show among a throng of tourists and noticed, prior to passing through a security checkpoint, large screens proclaiming that facial recognition was being used “to improve your experience, to help ensure the safety and security of our venue and our guests, and to enforce our venue policies.” A link directing visitors to Sphere’s privacy policy website was displayed below the notice, but this policy makes no direct mention of “facial recognition.” Instead, it outlines the seemingly incidental collection and use of “biometric information” writ large captured while one is at Sphere, or any of the properties owned by the Madison Square Garden Family. This information can be disclosed to essentially any third party MSG Family deems legitimate. A visitor agrees to this once they’ve made use of any of MSG Family’s services, or seemingly once they’ve just stepped onto its property. The company has already gotten in trouble for abusing this technology. In 2022, The New York Times reported on an instance of MSG Entertainment’s banning a lawyer involved in litigation against the company from Radio City Music Hall, which MSG Entertainment owns. The company also used facial recognition to enforce an “exclusion list,” which included other individuals MSG had contentious business relations with. Per Wired, “Other lawyers banned from MSG Entertainment properties, including Madison Square Garden, sued the company over its use of facial recognition, but the case was ultimately dismissed.”

What is so nebulous and nefarious here is the increasing inability of ordinary people to opt out of these services, marshaled in the name of security and convenience, where the question of how exactly our biometric data is used can’t be readily answered. By now, most people know their data is sold to third-party companies for the purposes of, say, targeted advertising. While lucrative, advertising is becoming a lower-tier use. The ACLU has drawn attention to the increased use of facial recognition in public venues like sports stadiums, where, as with the TSA, such systems are said to be implemented for the public’s benefit and protection. Utilizing such technology to control entry is a huge step in the wrong direction. But adding facial recognition to the process isn’t a matter of shoring up competence. Instead, it allows for the normalization of surveillance.

Bipartisan anxiety and inquiry around this topic, specifically facial recognition in airports, has been met with opposition from airlines, who claim that these methods make for a “seamless and secure” travel experience. But companies at the forefront of the push to integrate biometric capture into the fabric of daily life are exploiting temporary gaps in regulation, choosing self-limitation and textual vagaries. There’s a sense these companies are merely waiting to see how the federal government will or won’t enforce guidelines around facial recognition. According to a 2024 government report, “There are currently no federal laws or regulations that expressly authorize or limit FRT use by the federal government.”

A familiar fear arises here, once ambient but growing more visceral and palpable with each right-wing provocation against civil rights and personal autonomy. That the dreams of the Trump administration dovetail with Silicon Valley’s warped vision of the future only exacerbates a tenuous state of affairs. We have been passing through a steadily changing surveillance landscape, the tools of which are becoming more difficult to ignore. The perennial question remains: What are we willing to trade for convenience and the illusion of security?

For starters, the public must be wary and violations of data privacy need to dwindle. Small instances of refusal, like not having your photo taken by the TSA, likely won’t snowball into radical acts—the systems in which these technologies are enmeshed are already massive and entrenched—but they are still some of the few chances the public has to choose when their data is taken. The desire for privacy, rather than an admission of guilt as Republicans like to imply, is a precious thing. It’s worth taking a few minutes and some minor irritation to preserve it.
